Something is broken here

Or "Something is rotten in the state of Denmark"

how many times have you done this?

This is part one of a three-part exploratory series where I try to describe the problem observed, how reframing some long held assumptions can allow an emergent architecture, and ending with how such a workflow could look in practice.

Over the last decade I could not help but feel that something was wrong in how we handled the process of data modeling in our various initiatives. That there was a hidden friction that I could feel but not quite put my finger on - nor name - between how we modeled our data and how we actually built and delivered.

I have tried to put words to this friction, to understand its nature, where it comes from, what it actually means…

Let me give some examples (non-exhaustive) of the symptoms of rot that I see:

ERD Diagrams are, if even present, woefully out of sync of the actual code base

There is probably not a Conceptual Diagram to be found at all

And if there actually is one, it’s most likely a photo of a whiteboard session some 6-12 months ago posted in your Wiki

There is a backlog item every few sprints saying: Update Diagrams

So, is this a post about me complaining about lack of rigor/discipline in how we document our work? No, not at all. The symptoms above are just that, symptoms of something much more fundamental being broken in how actual work gets done today.

Nor is this a post on which modeling technique, or tooling, to adopt or anything in that vein. It’s about the actual process itself.

So let’s first recap on how the data models were traditionally built:

We would sit down with stakeholders, draw up a high level Conceptual Model, i.e. Customer→places→Order→contains→Products or something similar

This Conceptual Model would be refined in an ERD tool of choice

A Logical Model would be derived from the Conceptual Model

A final Physical Model would be produced for the given target database

For more mature organizations, the initial conceptual model would probably derive Concepts from a Business Glossary, i.e. one level of abstraction even higher…

Note: I refer to this flow or modeling exercises: Conceptual→Logical→Physical as the cascade.

"Everyone has a data model, but some of us have a deliberately designed one. And even better, some of us have our designs documented for future (re)use and reference." — Juha Korpela, Conceptual, Logical, Physical - the Truth(*) About Data Models

This worked quite well for a long time, upfront design, for later implementation, single source of “truth” as it were. It was a process of semantic refinement, and that process mapped neatly with our actual workflow. Waterfall was - regardless of how much we tried to “be agile” - how data work actually was done. we may have adopted agile ceremonies etc, and try to shoehorn these activities into something resembling agile, but the fundamental part of the cascade was for all intents and purposes a waterfall process.

But in the last decade or so, actual agile processes have made their entry into how data workflows as well. And let me add a heartfelt Finally! to that. It has re-shaped how we deliver, speed to insight, scope of work that can be delivered, and replaced stale ceremonies with pragmatic workflows that actually deliver.

Sadly, one effect of this change, is the introduction of real friction in terms of how to keep our models alive, actually reflecting reality, it has indeed became a real pain point. We have a continuous loop of reactive model artifact maintenance suddenly. which in turn, introduces a paradox.

The Effort Paradox

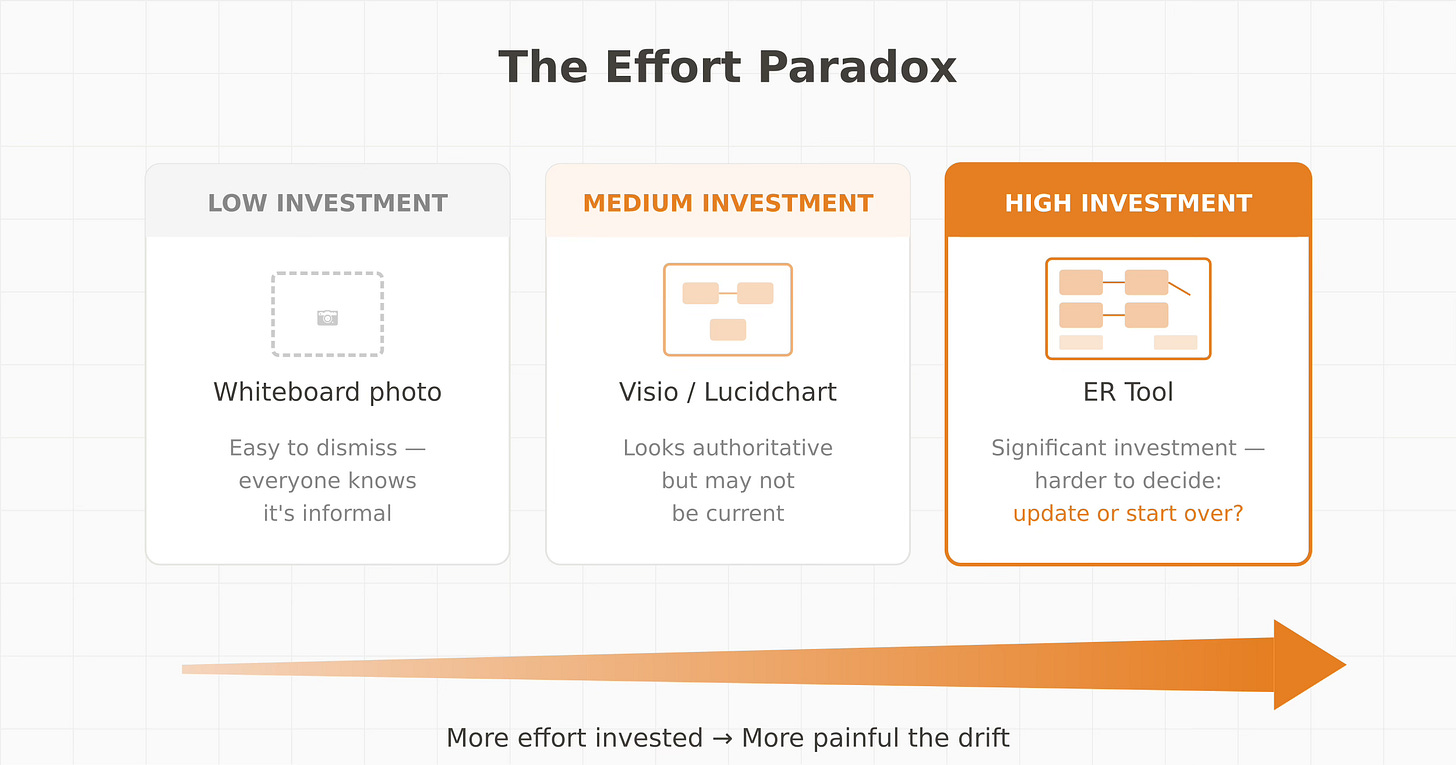

Let’s go back to that whiteboard moment. The photo is there to capture knowledge. Many teams, trying to be better, to have better documentation and processes in place, will try and redraw that photo in Visio, Lucidchart, Excalidraw (shout out to excalidraw for being a beautiful tool I can’t live without btw) or similar. Clean it up, add proper notation, make it look good and official even. This then goes into your Project or Architecture Wiki. Looks good. But it will still drift. You will still need to refresh it, continuously.

Other teams will take this even further, ERWin, ER Studio, SqlDbm… Actual ER tooling, adding days/weeks of effort to get the conceptual model “complete” with attributes and all, do the whole Conceptual→Logical→Physical Cascade. Well documented. Beautiful. And still, it will drift.

The fundamental problem here isn’t lack of effort. It’s the disconnect. Any modeling effort you do today outside your actual code base will drift. It’s not a question of if, but when. Period. And let me add: saying this is a human discipline issue is woefully inadequate. Our workflows are today incentivizing delivery. Continuous delivery is the holy grail. Speed to Delivery is non-optional. So it’s fundamentally a process issue by itself.

There is a certain irony here as well: the more effort you put in to make your documentation and artifacts good, the more painful the drift, and costly the maintenance and catching up said drift becomes:

The tooling isn’t the problem, they are all excellent at what they do. The problem lies in the fact that they are out-of-band, they live outside your actual delivery workflow. As long as your model artifacts live in a separate tool, with a separate cadence, coupled with how changes work today in our workstreams, drift will occur.

To be clear, I find the core of this issue to be structural, not one of discipline. Discipline implies something lacking in the people — but our workflows today are built on automation, quality gates, parallel delivery. There's no space for "time out" for upfront design. The work happens within the iteration, on the piece of the system you're touching right now.

So, is that it then? our current issues are born solely from us getting better, more up to date tooling, and our processes having matured so we can deliver at speed? Well, in my opinion no. It is certainly a foundational part of the underlying issue, but there are other factors that can help us explain how we got here. One thing that deserves to be kept in mind in my opinion, is the genesis of modern data engineering and Big Data movement happening just around the start of this decay.

When the term Data Engineer was coined, it was in many ways a conscious break of the traditional titles such as BI/ETL/DWH Developer etc. They were the New Kids on the Block. Python/Scala first, Hadoop. Schema-on-read. Software principles first, and adequate tooling to do so. We don’t need no upfront data modeling.

And credit where it’s due: they were right about most of it. The data world at that point was slow, heavyweight, and really hard to push forward. Our processes were arcane, manual, and really difficult to operate at scale. We were way behind anything resembling modern engineering practices in our delivery. They brought automation, new paradigms such as separation of compute and storage, streaming, rich orchestration options. These were real advances. Really. It brought the data industry as a whole more or less kicking and screaming into the 21st century. We owe them some serious gratitude for all of this.

But here is the thing: the classic case of the baby going out with the bathwater comes around again. In their rebellion against the old guard, Data Modeling was treated just as obsolete as the rest of it. So we now have a whole new generation of Data Engineers with strong engineering chops, yet weak modeling skills. It is not part of the curriculum, the standard entry to the field no longer cares for this aspect. Current curriculum is too often just a list of tools, and if you’re lucky, patterns. Note: I am speaking towards a trend here, and doing so in extremely broad strokes.

And one more point worth adding to this is the generational shift also happening right now in the field: A lot of the people who do carry the conceptual knowledge, even if only in their heads, are retiring, moving on or simply spread too thin. The tribal knowledge is walking out the doors in an ever increasing pace.

This has a compound effect over time:

implicit knowledge leaving,

tenure is shorter, less time to accumulate institutional knowledge, less incentive to document what you won’t be around to maintain

the feedback loop is slow, bad modeling can take years before the hurt is felt, and by that time chances are the people who caused it (and could learn from it) are already gone

The skill atrophied at exactly the moment the knowledge holders started exiting.

And that in my view is quite clearly an actual structural vulnerability in how organizations operate their data work.

I am not the first, and probably won’t be the last, to lament the “Lost Art of Data Modeling”. Especially these days when AI is pushing everyone to improve their models, their semantics and beyond :) It was quite interesting to read other people’s perspectives on this, as I did my research on this topic before writing this. I’ll leave you with two insightful articles and quotes:

from Bill Coulam’s work at datasherpa:

“Since about 2011, everywhere I’ve been engaged to introduce good data architecture, they have little to no documentation and haven’t seen data modeling done in at least a decade.”

— datasherpa.blog, The Lost Art and Science of Data Modeling

And from Chad Sanderson’s brilliant article, which I highly recommend to read:

”Data modeling appears to be a forgotten language, spoken only by the most ancient data architects shrouded in robes and hunched over a tattered copy of Corporate Information Factory, reading by candlelight."

— Chad Sanderson, The Death of Data Modeling

And of course Juha Korpela’s post linked earlier, I could add many more, but I think the point has been made. The observation is shared, and needs to be called out more.

Now, I earlier said that this started with Agile. How did the software world handle this same tension? It's an interesting parallel, after all. Enterprise Architecture faced the exact same pressures - continuous delivery, faster feedback loops, less time for upfront design and documentation. And they did adapt: C4 diagrams and tooling, UML and similar artifacts only kept on the higher level of abstractions, living documentation close to the codebase and codebase seen as the source of truth. They kept the architectural principles, while dropping the heavyweight processes.

"This 'evolvability' – the ability for architecture to be changed or evolved over time – is becoming critical. There are many reasons for this: the increasingly fast pace of the industry; adoption of Agile approaches at scale; the cloud-first nature of much new development..." — Open Agile Architecture

We as data practitioners are still struggling with this: living documentation, architectural artifacts that stay current with the actual codebase. The cascade — Conceptual→Logical→Physical — is still very much alive. But we made it implicit, hidden, undocumented. It now lives only as tribal knowledge and undocumented assumptions, alongside a continuously out of sync wiki.

Excellent analysis. Really resonated

Brilliant framing on the structural disconnect between delivery workflow and model maintenace. The comparison to software architecture's evolution (C4, living docs near codebase) is spot on, we're stuck treating models as separate artfacts when they should be first-class citizens in the pipeline. I've seen similar pain in teams where the 'update diagrams' ticket sits forever becuase there's no clear home for it in sprint commitments.